Changhao Chen

The Hong Kong University of Science and Technology, Guangzhou, China

I am a Tenure-Track Assistant Professor in the Intelligent Transportation Thrust and the Artificial Intelligence Thrust at The Hong Kong University of Science and Technology (Guangzhou), China. I am also affiliated with the Division of Emerging Interdisciplinary Areas (EMIA) at HKUST’s Clear Water Bay campus. Before that, I was a lecturer (2021-2024) at the National University of Defense Technology, China, and a postdoctoral researcher (2020) in the Department of Computer Science, University of Oxford. I received my Ph.D. in Computer Science (2016-2020) from the University of Oxford, supervised by Prof. Niki Trigoni and Prof. Andrew Markham, my Master in Engineering (2014-2016) from the National University of Defense Technology, and my Bachelor in Engineering (2010-2014) from Tongji University, China.

I lead the HKUST-GZ PEAK Lab (Perception, Embodiment, Autonomy and Kinematics), where our research focuses on Embodied AI and Autonomous Systems, particularly the challenges of Open-World Robotic Perception, Navigation and Interaction. Traditional robotic algorithms often depend on meticulously crafted geometric and dynamic models, which may struggle to adapt to ever-changing, complex environments. Our research demonstrates that developing learning solutions over these static models enables autonomous systems to achieve independent motion estimation, robust spatial scene perception, and reliable, safe navigation. Our work combines novel algorithms and methods (including learning and statistics, signal processing, optimization, geometry, and dynamics modelling) with system implementations (including sensor fusion, hardware-software codesign, and computing architecture). Our research outcomes have been successfully applied to a diverse range of platforms, from robots, drones, and self-driving vehicles to smartphones, smartwatches, and VR/AR devices, supporting their real-world applications in intelligent transportation, emergency rescue, and hospital efficiency enhancement.

Our major contributions have been in the following research directions:

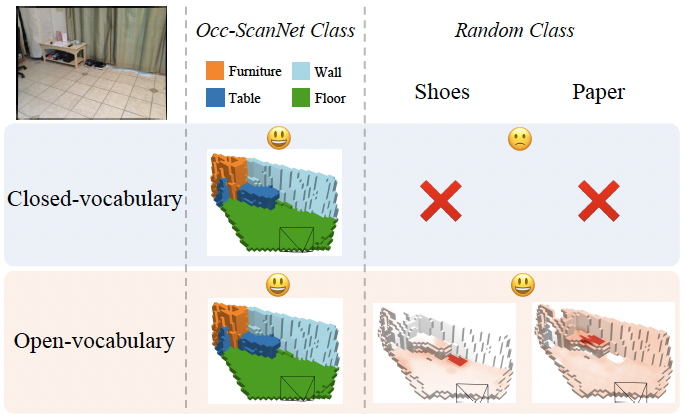

Perception — Open-World Spatial Understanding: We develop principled learning-based and geometric methods for open-vocabulary 3D occupancy prediction, self-supervised SLAM, and city-scale neural localization and rendering, enabling robots to perceive and reason about unbounded, dynamic environments.

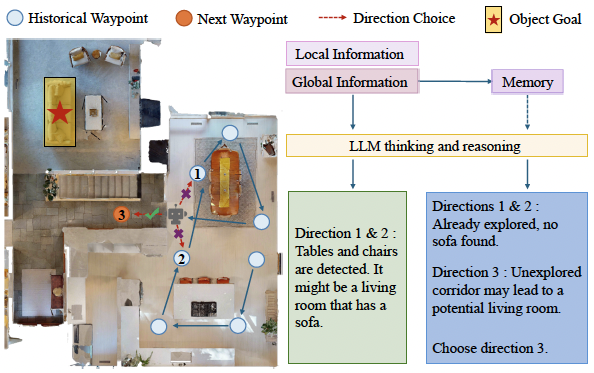

Embodiment — Learning to Act: We explore vision–language–action (VLA) models and world models for scalable robot policy learning, as well as LLM-driven planning and decision-making for complex, long-horizon mobile manipulation tasks.

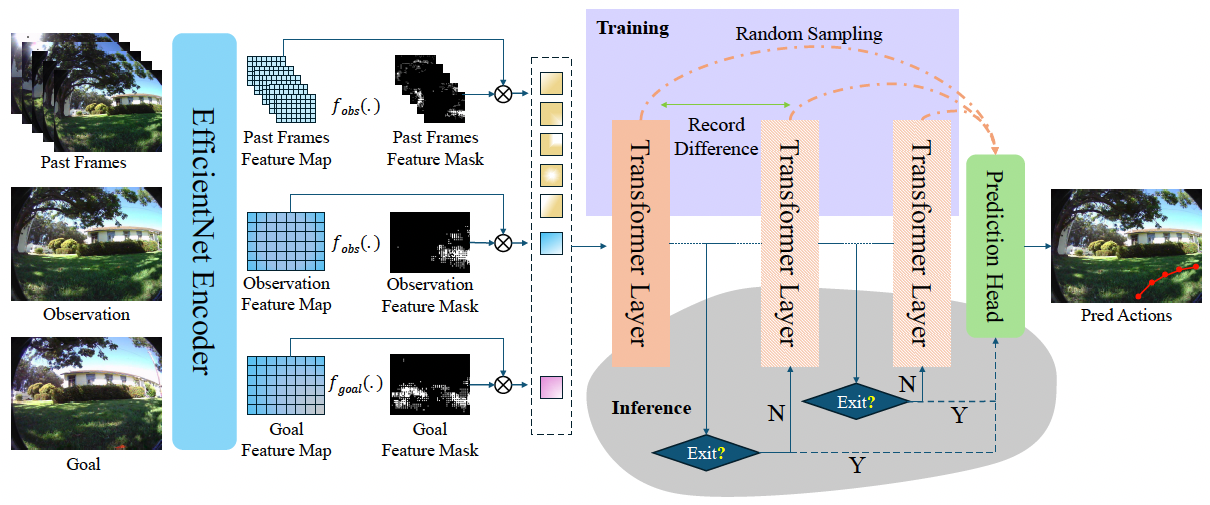

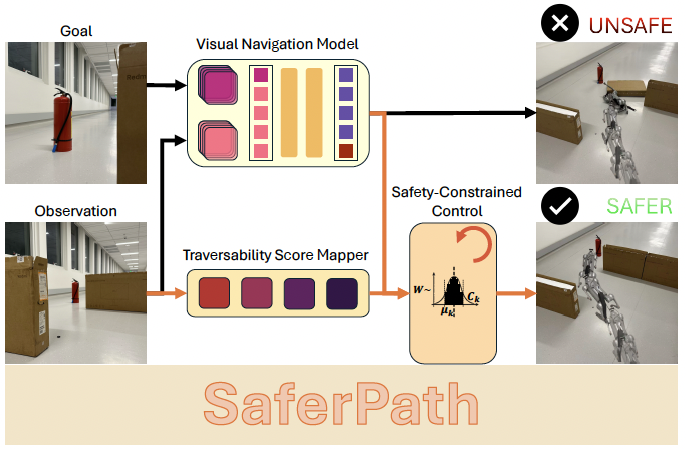

Autonomy — Safe and Agile Control: We advance stability-constrained dynamical models and efficient policy learning frameworks to achieve safe, reliable navigation and agile locomotion in real-world settings.

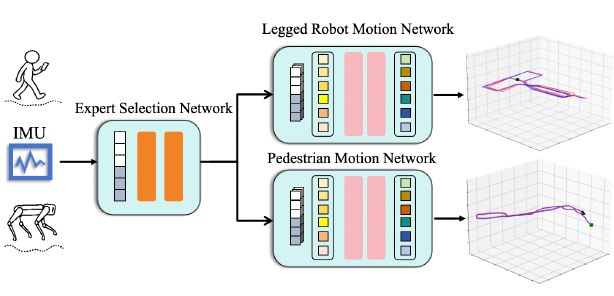

Kinematics — Robust State Estimation: We pioneer deep learning-based inertial motion tracking and task-driven multimodal fusion systems that deliver accurate and robust state estimation under diverse and challenging conditions.

news

| Aug 17, 2025 | We have several open positions for Spring/Fall 2026, including fully funded Ph.D. scholarships and openings for Research Assistants and Visiting Students! If you want to join PEAK-Lab, please read here carefully. |
|---|---|

selected publications

- Monocular Open Vocabulary Occupancy Prediction for Indoor Scenes. In The IEEE/CVF Conference on Computer Vision & Pattern Recognition (CVPR-2026), Oral Presentation, Award Candidate Paper, 2026

- SaferPath: Hierarchical Visual Navigation with Learned Guidance and Safety-Constrained Control. In The IEEE International Conference on Robotics and Automation (ICRA-2026), 2026

- X-IONet: Cross-Platform Inertial Odometry Network for Pedestrian and Legged Robot. The IEEE Robotics and Automation Letters (RA-L), 2026